GenAI Customer Support Agent

Reducing support ticket volume by 60% with a RAG-based conversational AI agent.

Role

Senior PM

Timeline

8 Months

Team

3 AI Engineers, 2 Full Stack, 1 UX Researcher

Key Insight

The turning point in this project was not identifying the problems—it was reframing how the system needed to operate in a fundamentally constrained environment.

In low-connectivity environments, a web-only system is inherently fragile. True resilience requires an offline-first approach that allows data capture and processing to happen at the edge.

The system also had to serve fundamentally different users: District Surveillance Officers needed the depth and flexibility of desktop tools for case investigation, while frontline health workers required fast, low-friction input methods like SMS.

The breakthrough was recognizing that automated logic—such as SMS-to-case provisioning—could eliminate manual handoffs entirely, removing the bottlenecks responsible for weeks of delay.

Together, these insights reframed the problem from simply digitizing reporting to redesigning the entire system around resilience, user context, and automation—laying the foundation for a solution that could operate reliably at national scale.

Vision & Strategy

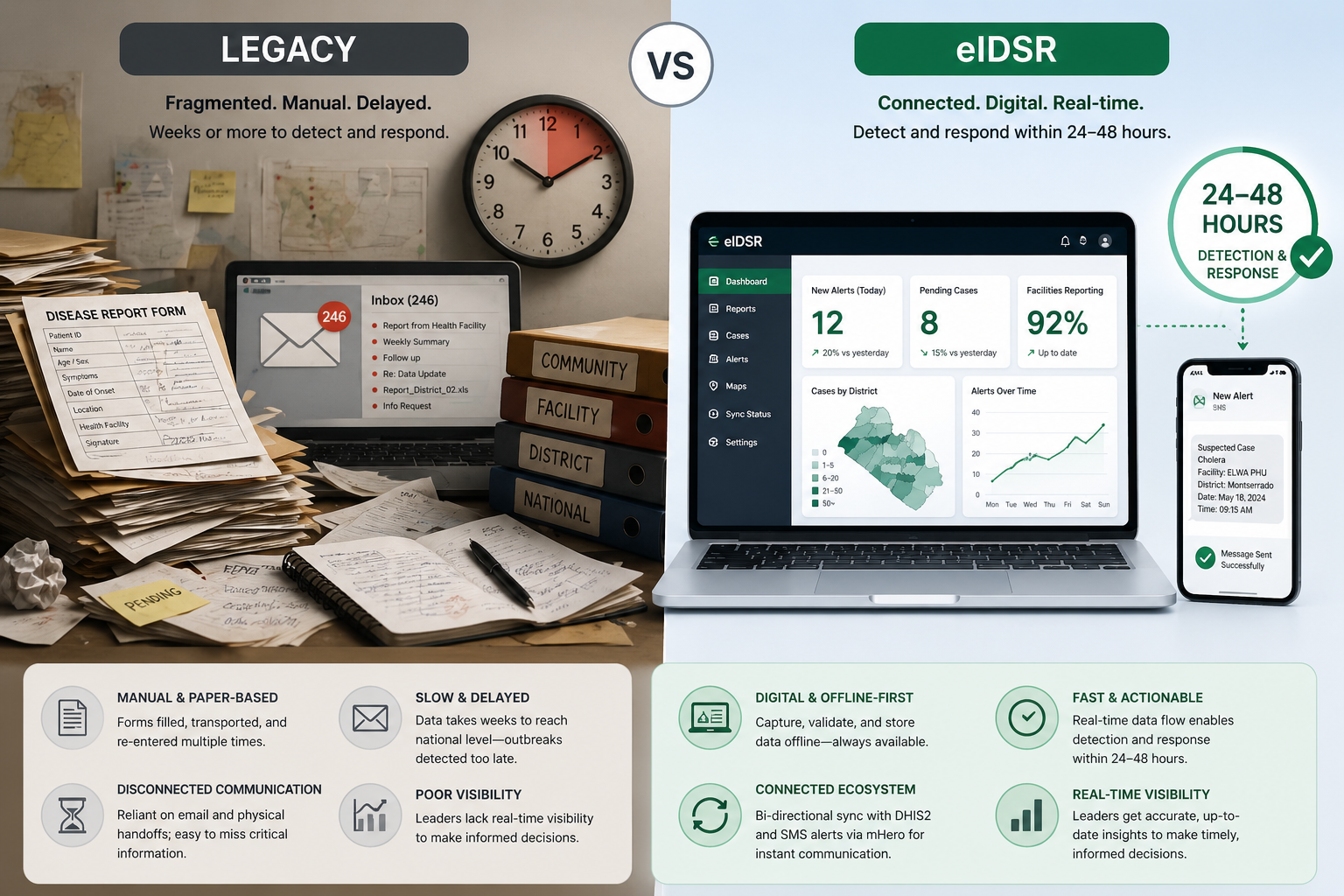

Our vision was to transform Liberia's reactive, paper-based reporting system into a proactive early-warning engine capable of delivering timely, actionable intelligence to contain infectious threats.

To achieve this, the strategy centered on an offline-first ecosystem—ensuring that data capture, validation, and access could occur reliably without dependence on continuous internet connectivity.

We implemented a standalone Windows application to guarantee full functionality for district teams regardless of connectivity. This was complemented by bidirectional synchronization with a central DHIS2 server and SMS-based alerting via mHero, enabling real-time communication from the field.

This shift redefined surveillance, replacing fragmented, manual, delayed reporting with a connected digital system. The result was faster data flow, improved reliability, and near real-time visibility across all levels of the health system, enabling action within 24–48 hours.

This strategy provided a clear foundation for building a system that could operate reliably at scale while meeting the realities of the field.

Solution & System Design

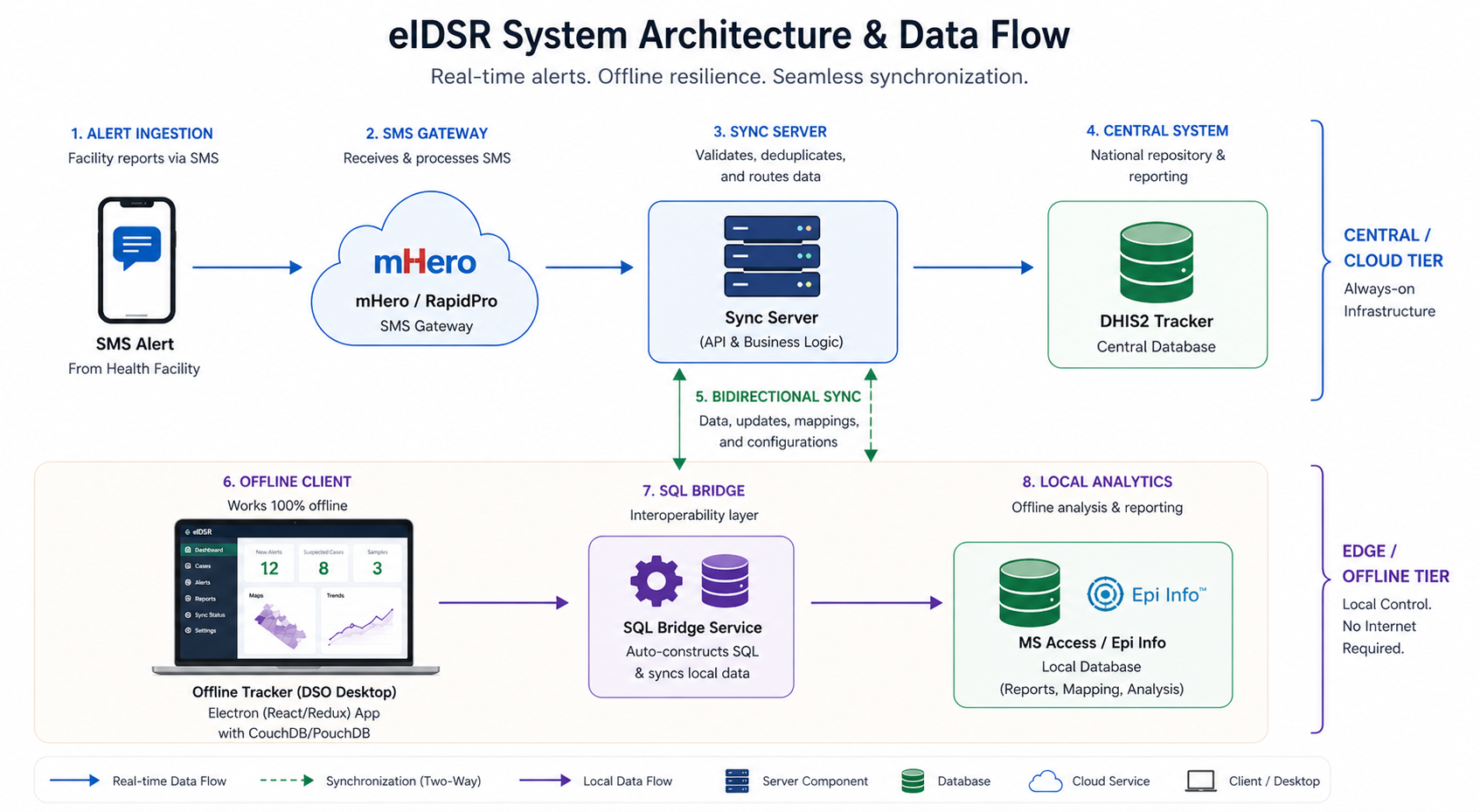

To address these challenges, we designed a multi-tier system architecture optimized for reliability in low-bandwidth and offline environments, ensuring continuous operation across all levels of the health system.

At the edge, we developed a standalone desktop application that enabled district teams to capture, validate, and manage case data entirely offline. Local data persistence ensured that all functionality remained available regardless of connectivity.

To support offline analysis, we implemented an interoperability layer that automatically populated a local database, allowing epidemiologists to perform reporting and mapping using familiar tools without relying on central servers.

The data pipeline was initiated through SMS alerts, which automatically triggered case creation and synchronized records across both local clients and the central system—eliminating manual data entry and reducing delays.
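As a rough illustration of SMS-to-case provisioning, the sketch below parses a structured alert and turns it into a case record. The message layout (`ALERT <disease> <facility> <age> <sex>`), the `Case` fields, and the `create_case` helper are hypothetical and are not the format used in the deployed system.

```python
import re
import uuid
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

# Hypothetical alert layout: "ALERT <disease> <facility> <age> <sex>"
ALERT_PATTERN = re.compile(
    r"^ALERT\s+(?P<disease>[A-Z]{2,5})\s+(?P<facility>[A-Z0-9]+)"
    r"\s+(?P<age>\d{1,3})\s+(?P<sex>[MF])$"
)


@dataclass
class Case:
    disease: str
    facility: str
    age: int
    sex: str
    # Generated locally so a case can be created without contacting a server.
    case_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )
    synced: bool = False  # flipped once the record reaches the central system


def create_case(sms_text: str) -> Optional[Case]:
    """Return a Case for a well-formed alert, or None if the SMS does not parse."""
    match = ALERT_PATTERN.match(sms_text.strip().upper())
    if match is None:
        return None  # malformed alerts would be routed to manual review
    return Case(
        disease=match["disease"],
        facility=match["facility"],
        age=int(match["age"]),
        sex=match["sex"],
    )
```

Returning `None` for anything that does not match keeps malformed messages out of the case list instead of guessing at their meaning.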

This architecture turned fragmented reporting into a synchronized, resilient system: data flowed automatically from SMS alerts to both local and central systems, and case data stayed accessible regardless of connectivity.

This architecture ensured that the system could operate reliably at every level while scaling to support nationwide surveillance and response.

Tradeoffs

Key product decisions required balancing usability, reliability, and security within the constraints of a low-connectivity, high-stakes environment.

Desktop vs. Mobile

While mobile is typically preferred for field deployments, we chose a desktop-based application because District Surveillance Officers were already equipped with laptops and required a more robust interface for complex clinical workflows. Connectivity was intentionally controlled via USB internet dongles: officers worked offline, and the application synchronized automatically whenever a connection was available. This reduced reliance on personal devices and ensured consistent, secure data transfer.

Security vs. Friction

We enforced authentication and encrypted data handling, requiring users to log in even in offline mode. While this introduced additional friction in time-sensitive situations, it was necessary to ensure patient data confidentiality and meet national security standards.
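One common way to support login without a network is to keep a salted password hash on the device and verify against it locally. The sketch below shows that pattern using Python's standard library. The source does not describe the actual mechanism, so the function names and the iteration count are assumptions.

```python
import hashlib
import hmac
import os
from typing import Optional, Tuple

ITERATIONS = 200_000  # assumed work factor, not a value from the project


def hash_password(password: str, salt: Optional[bytes] = None) -> Tuple[bytes, bytes]:
    """Derive a salted hash; a fresh random salt is generated when none is given."""
    salt = salt if salt is not None else os.urandom(16)
    digest = hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, ITERATIONS
    )
    return salt, digest


def verify_offline(password: str, salt: bytes, expected: bytes) -> bool:
    """Check a login attempt against the locally stored hash, with no server call."""
    _, digest = hash_password(password, salt)
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(digest, expected)
```

Only the salt and the derived hash would be stored on the laptop, never the password itself.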

Simplicity vs. Resilience

An offline-first architecture introduced additional complexity in synchronization and data consistency. However, this tradeoff was essential to ensure the system remained functional and reliable in environments with intermittent or no connectivity.
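The synchronization complexity can be pictured with a local "outbox" pattern: every record is written to local storage first, then pushed to the central server when a connection exists. The sketch below is a minimal version under stated assumptions: it uses SQLite as the local store, covers only the upload direction, ignores conflict resolution, and takes a hypothetical `push` callable in place of any real server API.

```python
import json
import sqlite3
from typing import Callable


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open the local store and make sure the outbox table exists."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS outbox ("
        "id INTEGER PRIMARY KEY AUTOINCREMENT, "
        "payload TEXT NOT NULL, "
        "synced INTEGER NOT NULL DEFAULT 0)"
    )
    return conn


def save_local(conn: sqlite3.Connection, record: dict) -> None:
    """Persist a record locally; this always works, online or not."""
    with conn:
        conn.execute(
            "INSERT INTO outbox (payload) VALUES (?)", (json.dumps(record),)
        )


def sync_pending(conn: sqlite3.Connection, push: Callable[[dict], bool]) -> int:
    """Push unsynced records in order; stop at the first failure and retry later."""
    rows = conn.execute(
        "SELECT id, payload FROM outbox WHERE synced = 0 ORDER BY id"
    ).fetchall()
    sent = 0
    for row_id, payload in rows:
        try:
            ok = push(json.loads(payload))
        except OSError:
            ok = False  # treat network errors as "still offline"
        if not ok:
            break
        with conn:
            conn.execute("UPDATE outbox SET synced = 1 WHERE id = ?", (row_id,))
        sent += 1
    return sent
```

Marking each record as synced only after a successful push means an interrupted connection leaves the remaining records queued rather than lost.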

These tradeoffs were deliberate choices to ensure the system could operate effectively within the realities of the field while meeting critical reliability and security requirements.

Execution & Leadership

As part of the founding technical team, I led the delivery of critical system components while working closely with field users to ensure the solution operated effectively in real-world conditions.

I owned the development of the data synchronization layer and the dynamic form engine, ensuring reliable data flow between offline and central systems while improving usability for frontline users.

I led system deployment and training for over 92 health professionals—including District Surveillance Officers and laboratory staff—across two pilot counties, ensuring successful adoption and operational readiness.

Execution required rapid iteration based on field feedback. For example, we adapted SMS formatting logic after discovering that local GSM networks occasionally corrupted characters or altered message encoding, ensuring reliable data transmission.
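A defensive normalization step of this kind might look like the sketch below, which reduces an incoming message to plain uppercase ASCII tokens before parsing. The actual formatting rules are not described here, so the choice to strip accents, drop non-ASCII characters, and treat punctuation as separators is an assumption.

```python
import re
import unicodedata


def normalize_sms(raw: str) -> str:
    """Reduce an SMS body to uppercase ASCII tokens separated by single spaces."""
    # Split accented characters into base letter plus combining mark,
    # and map compatibility characters (e.g. non-breaking space) to plain forms.
    decomposed = unicodedata.normalize("NFKD", raw)
    # Drop anything that is still outside ASCII, including the combining marks.
    ascii_only = decomposed.encode("ascii", "ignore").decode("ascii")
    # Treat any run of punctuation, whitespace, or control characters as one space.
    cleaned = re.sub(r"[^A-Za-z0-9]+", " ", ascii_only)
    return cleaned.strip().upper()
```

Normalizing before parsing lets the parser stay strict while tolerating the character substitutions a network may introduce.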

Hands-on training sessions with District Surveillance Officers, laboratory staff, and frontline health workers drove rapid adoption, so the system could be used effectively in real-world, low-resource settings from day one.

This hands-on, iterative approach ensured the system was not only built for the field, but proven in it.

Metrics & Impact

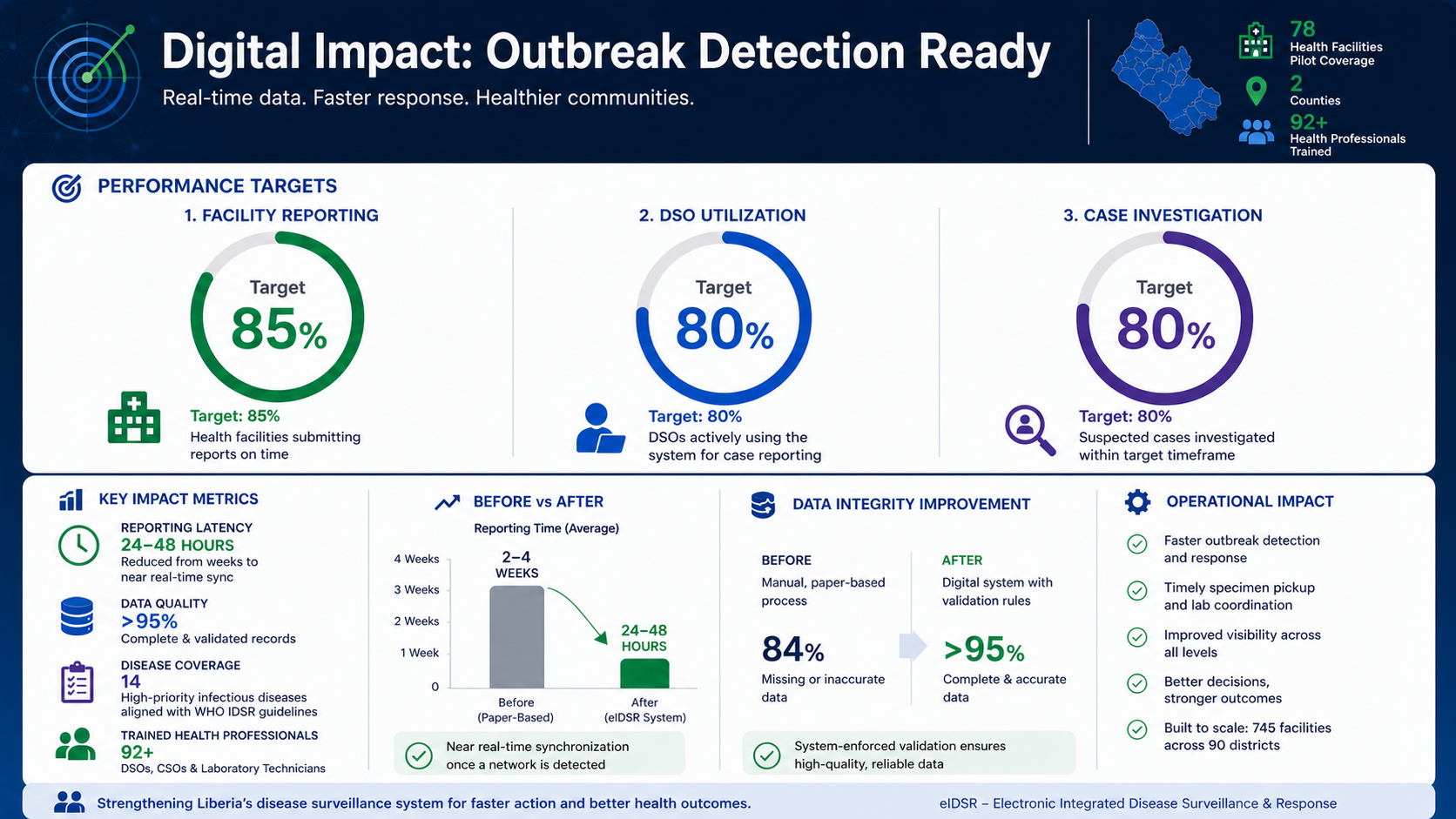

The system delivered measurable improvements in speed, data quality, and operational scale—transforming how outbreak surveillance and response were managed across the country.

- National Scale: Piloted across 78 health facilities, with architecture designed to scale to 745 facilities and 90 districts nationwide

- Latency Reduction: Cut reporting delays from multiple weeks to near real time when connectivity was available

- System Adoption: Trained 92+ healthcare professionals, achieving ~80% system utilization for case reporting in pilot regions

- Data Integrity: Eliminated manual data errors through enforced validation, significantly improving completeness and consistency of clinical data

- Disease Coverage: Digitized investigation workflows for 14 high-priority infectious diseases, aligning field reporting with WHO IDSR standards

- Operational Performance: Enabled system targets of 85% facility reporting and 85% investigation rates for suspected outbreaks

- Workflow Automation: Automated specimen pickup alerts and case notifications, ensuring faster coordination between surveillance teams and laboratories

By combining high adoption with faster reporting and improved data quality, the system transformed surveillance from delayed reporting to timely, actionable outbreak response.

The system reduced outbreak reporting timelines from weeks to as little as 24–48 hours, fundamentally changing the speed of national response.

Together, these improvements enabled faster detection, better coordination, and more effective containment of infectious disease outbreaks.

Lessons Learned

Building and piloting a system designed for national scale surfaced critical lessons about designing for constrained environments, managing data dependencies, and aligning with real-world operational needs.

Connectivity Constraints Were More Complex Than Expected

Variability across GSM networks made SMS delivery inconsistent, reinforcing that offline-first design is not a feature but a foundational requirement for reliability.

Data Quality Depends on Strong Master Data Governance

The system's effectiveness depended heavily on the quality of external data sources. Incomplete or outdated registries disrupted automated workflows, highlighting the need for strong master data governance alongside flexible system design.

Design for Cognitive Load in High-Pressure Environments

Designing for high-pressure environments required minimizing cognitive load while enforcing data quality. Conditional workflows simplified data entry, while strict validation ensured that only complete, actionable data reached decision-makers.
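The combination of conditional workflows and strict validation can be sketched as a small form schema in which a field is shown, and therefore required, only when an earlier answer makes it relevant. The schema layout, the field names, and the `show_if` key below are hypothetical and do not reflect the project's form engine.

```python
from typing import Any, Dict, List

Answers = Dict[str, Any]

# Hypothetical schema: a field may carry a `show_if` predicate over the answers.
FORM: List[Dict[str, Any]] = [
    {"name": "disease", "required": True},
    {"name": "specimen_collected", "required": True},
    {
        "name": "specimen_type",
        "required": True,
        "show_if": lambda a: a.get("specimen_collected") == "yes",
    },
]


def visible_fields(form: List[Dict[str, Any]], answers: Answers) -> List[str]:
    """Return the fields the user should see given the answers so far."""
    return [
        f["name"] for f in form if f.get("show_if", lambda a: True)(answers)
    ]


def validate(form: List[Dict[str, Any]], answers: Answers) -> List[str]:
    """Return the visible required fields that are still unanswered."""
    visible = set(visible_fields(form, answers))
    return [
        f["name"]
        for f in form
        if f["name"] in visible
        and f.get("required")
        and answers.get(f["name"]) in (None, "")
    ]
```

Hidden fields are never reported as missing, so the user sees fewer questions while the record still cannot be submitted with a relevant field blank.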

Bridge Global Standards with Local Reality

Aligning global reporting standards with local operational realities was critical. Success required bridging the gap between international requirements and the practical constraints faced by frontline health workers.

These lessons continue to shape how I approach building resilient, user-centered systems in complex and resource-constrained environments.